LLMs and reasoning

Multi-level Pattern Matching and why better sampling seems to be low-hanging fruit right now

Update:

I now think this should be two articles - one on the claims from GSM Symbolic about LLMs doing only pattern matching - the other one on the sampling techniques.

Multi-level Pattern Matching

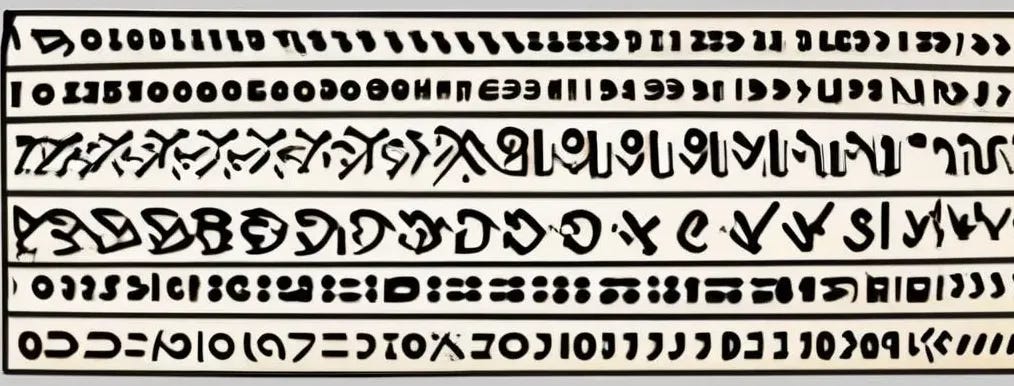

IMHO LLMs primarily integrate strings into complex patterns. This happens on many levels: texts (or rather some internal representation of texts) computed earlier are later fitted into higher-level patterns. A good example is Chain of Thought, which has the higher-level pattern of thinking step by step and lower-level patterns for the individual steps. This is the linearized subgraph matching described in Faith and Fate: Limits of Transformers on Compositionality, but I would rather call it multi-level pattern matching (the equivalence here is like the one in Kleene’s Theorem). "Multi-level" is somewhat redundant here, as standard regular expressions already have grouping operations, but I add it to show that the matching happens in multiple steps. You can find a good explanation of that paper on the answer.ai blog.

This might sound like the stochastic parrot criticism of LLMs, and some do argue that LLMs do pattern matching instead of reasoning. I think reasoning can emerge from pattern matching, just like universal computation arises from a short set of rules and an empty tape.

But it can be a very fragile process.

Distractions

One failure case is when patterns that represent unrelated thoughts get mixed together inside the LLM, causing semantic leakage:

when given the prompt “He likes yellow. He works as a”, GPT4o generates the output “school bus driver”

Here, although the probability distribution is unexpectedly altered, the resulting answer remains valid. But in GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models the authors provide examples where the leakage leads to an answer they consider wrong. Yet the examples they provide don’t seem entirely fair:

Oliver picks 44 kiwis on Friday. Then he picks 58 kiwis on Saturday. On Sunday, he picks double the number of kiwis he did on Friday, but five of them were a bit smaller than average. How many kiwis does Oliver have?

o1-mini: Oliver picks kiwis over three days as follows:

- Friday: 44 kiwis
- Saturday: 58 kiwis
- Sunday: Double the number he picked on Friday, which is 2 × 44 = 88 kiwis

However, on Sunday, 5 of these kiwis were smaller than average. We need to subtract them from the Sunday total: 88 (Sunday’s kiwis) - 5 (smaller kiwis) = 83 kiwis

Now, summing up the kiwis from all three days: 44 (Friday) + 58 (Saturday) + 83 (Sunday) = 185 kiwis

Oliver has a total of 185 kiwis.

o1-mini treats the question as if it was asked in good faith and tries to incorporate all the information from it into the answer. It's likely that an unsuspecting human would approach the problem similarly. Only in a strict mathematical mode do humans look for an answer that follows logically from the premises while accepting that not all of them must be used. In normal conversation we expect that people give us information so that we can incorporate it into the answer, and we often treat that rule as more important than strict logic. But even on mathematical tests humans can be distracted in similar ways (see for example this comment from a college teacher).
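To make the disagreement concrete: the two readings of the kiwi question differ only in whether the five smaller kiwis are subtracted. A minimal sketch of the arithmetic (the variable names are mine):

```python
# Quantities stated in the question.
friday = 44
saturday = 58
sunday = 2 * friday  # "double the number of kiwis he did on Friday"
smaller_than_average = 5  # the distracting detail

# Reading that ignores the distraction: size does not change the count.
expected_total = friday + saturday + sunday

# Reading produced by o1-mini above: the smaller kiwis are subtracted.
distracted_total = friday + saturday + (sunday - smaller_than_average)

print(expected_total, distracted_total)  # 190 185
```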

Still, I guess that in other cases this might lead to more obvious errors.

It is worth noting that adding a simple warning to the prompt about the questions being tricky seems to be enough to fix most of the errors.

Other failures

Distraction is not the only failure mode. Other examples suggest that LLMs fail to reason correctly when the authors change the names of the characters in the questions, the numbers, or other unimportant details, as if LLMs associated the correct reasoning with a particular wording. Yet other examples complicate the questions by adding more steps to the reasoning, which also leads to errors.
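The kind of perturbation described here can be sketched as a question template whose names and numbers are re-sampled while the required reasoning stays the same. This is only an illustration: the template, the name pool, and the number ranges below are made up for this post and are not taken from the paper.

```python
import random

# Hypothetical template: the same reasoning, with the surface details left open.
TEMPLATE = (
    "{name} picks {a} kiwis on Friday. Then {name} picks {b} kiwis on Saturday. "
    "On Sunday, {name} picks double the number of kiwis picked on Friday. "
    "How many kiwis does {name} have?"
)
NAMES = ["Oliver", "Sophie", "Amir", "Mei"]  # hypothetical name pool


def make_variant(rng: random.Random) -> tuple[str, int]:
    """Return one perturbed question together with its ground-truth answer."""
    name = rng.choice(NAMES)
    a = rng.randint(10, 99)
    b = rng.randint(10, 99)
    question = TEMPLATE.format(name=name, a=a, b=b)
    answer = a + b + 2 * a  # the reasoning is identical for every variant
    return question, answer


rng = random.Random(0)  # fixed seed so the variants are reproducible
variants = [make_variant(rng) for _ in range(3)]
for question, answer in variants:
    print(answer, "|", question)
```

Comparing a model's accuracy across such variants is what exposes the sensitivity to wording described above.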

The paper Evaluating LLMs’ Mathematical and Coding Competency through Ontology-guided Interventions provides another comprehensive catalogue of potential prompt modifications, highlighting various ways perturbations can lead to errors.

Failures and multi-level pattern matching

These failures show that LLMs do pattern matching - but they don’t show that they cannot reason - because reasoning can emerge from pattern matching.

The error frequency tends to increase with complexity. This is also compatible with the multi-level pattern matching model: additional complexity requires additional alignment operations, each of which can go wrong with non-zero probability.
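As a rough illustration of this compounding, assume that each alignment step succeeds independently with the same probability p. This is my simplification, not a claim from any of the papers. Under that assumption a chain of n steps succeeds with probability p^n:

```python
def chain_success_probability(p_step: float, n_steps: int) -> float:
    """Probability that all n_steps succeed, ASSUMING independent, equally reliable steps."""
    return p_step ** n_steps


# Hypothetical per-step reliability of 98%.
for n in (1, 5, 10, 20):
    print(n, round(chain_success_probability(0.98, n), 3))
```

Under this toy model even a small per-step error rate adds up quickly as the number of steps grows.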

Sampling for mitigation

Chain-of-Thought Reasoning Without Prompting reveals that LLMs can generate multiple alternative reasoning paths. By keeping more than one of the most probable tokens during sampling, we can access these alternatives. This works even if we do that top-k branching only for the first token, because the alternatives often diverge right at the first token. Among these alternatives there are often reasoning paths that eventually get a higher confidence score than the path that starts with the most probable token.
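As I understand the paper, the confidence score of a path is the gap between the two most probable tokens, averaged over the tokens of the final answer. The sketch below shows only that scoring and selection step. It assumes the k paths have already been decoded and that the per-token probabilities of the answer span are available; the `DecodedPath` type and all the numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class DecodedPath:
    text: str
    # One entry per answer token: the probabilities of the candidate tokens at that step.
    answer_step_probs: list[list[float]]


def answer_confidence(path: DecodedPath) -> float:
    """Average gap between the top-1 and top-2 token probabilities over the answer span."""
    gaps = []
    for probs in path.answer_step_probs:
        top1, top2 = sorted(probs, reverse=True)[:2]  # needs at least two candidates
        gaps.append(top1 - top2)
    return sum(gaps) / len(gaps)


def pick_most_confident(paths: list[DecodedPath]) -> DecodedPath:
    return max(paths, key=answer_confidence)


# Toy data: the first path stands for greedy decoding, the second for a path
# that started from the second most probable first token.
paths = [
    DecodedPath("185", [[0.45, 0.40, 0.15]]),
    DecodedPath("44 + 58 + 88 = 190", [[0.95, 0.03, 0.02]]),
]
best = pick_most_confident(paths)
print(best.text, round(answer_confidence(best), 2))
```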

Entropix tries to improve on that by using various measures like entropy and varentropy of the logits and attention blocks. The optillm GitHub repository explores CoT decoding and entropy-based decoding, among other methods, for enhancing reasoning capabilities. The authors suggest that the additional computations in Entropix do not improve the results in comparison with simple CoT decoding (but I am not sure if they also checked efficiency - conditional branching could save some computation).
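The two statistics have standard definitions: entropy is the expected surprisal -log p of the next-token distribution, and varentropy is the variance of that surprisal. A minimal sketch for the logits only (attention statistics are left out, and how Entropix turns these numbers into sampling decisions is not covered here):

```python
import math


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_and_varentropy(logits: list[float]) -> tuple[float, float]:
    """Entropy (in nats) of the next-token distribution and the variance of its surprisal."""
    probs = [p for p in softmax(logits) if p > 0.0]  # skip probabilities that underflowed to 0
    surprisals = [-math.log(p) for p in probs]
    entropy = sum(p * s for p, s in zip(probs, surprisals))
    varentropy = sum(p * (s - entropy) ** 2 for p, s in zip(probs, surprisals))
    return entropy, varentropy


# Toy logits over a four-token vocabulary.
confident = entropy_and_varentropy([10.0, 0.0, 0.0, 0.0])  # one dominant token
uncertain = entropy_and_varentropy([0.0, 0.0, 0.0, 0.0])   # uniform distribution
print(confident, uncertain)
```

The uniform case has the maximum entropy (log 4) and zero varentropy, while the peaked case has low entropy.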

Further testing would be valuable to determine how these techniques could improve outcomes for various failure types and mitigate semantic leakage.

The end game here is probably a trainable sampling block, as too many factors can influence the sampler for a hand-written algorithm to handle. Machine learning could offer an optimal solution here.

Structured prompts

By decoupling the sampling mechanism from the main LLM, we can enhance its functionality with additional data inputs. For instance, we could incorporate a pattern recognition system for locating the final answer within the complete output. This approach allows the sampling block to determine the confidence score of the answer itself, which provides a more accurate evaluation than assessing the entire output, potentially filled with numerous reasoning steps.
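A minimal sketch of such an answer locator, assuming the prompt instructs the model to end with a line of the form "Final answer: <number>". That marker is a convention invented for this example. The function returns the character span of the answer so that a sampler could score only the tokens inside it; mapping characters to tokens depends on the tokenizer and is not shown.

```python
import re
from typing import Optional

# Hypothetical convention: the structured prompt asks for "Final answer: <number>".
FINAL_ANSWER = re.compile(r"Final answer:\s*(-?\d+(?:\.\d+)?)")


def locate_final_answer(output: str) -> Optional[tuple[str, int, int]]:
    """Return (answer text, start offset, end offset), or None if no answer is found."""
    matches = list(FINAL_ANSWER.finditer(output))
    if not matches:
        return None
    last = matches[-1]  # keep the last occurrence in case the model restates the answer
    return last.group(1), last.start(1), last.end(1)


output = "Friday: 44. Saturday: 58. Sunday: 2 * 44 = 88.\nFinal answer: 190"
result = locate_final_answer(output)
print(result)
```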

I am an advocate of structured prompts for other reasons too.

Updates:

another article on better sampling: Self-Consistency Improves Chain of Thought Reasoning in Language Models - it samples k reasoning paths - then chooses the answer reached by the largest number of paths

a better worded article about the distracting information examples not being fair: Misinterpreting LLM 'Errors': How Experiment Design, Not AI Reasoning, Leads to False Conclusions

more on limitations of LLM “reasoning”: https://github.com/GoodAI/llm-chained-ops

critique of the Entropix approach: https://news.ycombinator.com/item?id=41947852
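The majority vote used by Self-Consistency (the first update above) can be sketched in a few lines. The sketch assumes the k reasoning paths have already been sampled and reduced to their final answers; the sample answers are invented.

```python
from collections import Counter


def majority_answer(answers: list[str]) -> tuple[str, float]:
    """Return the most frequent answer and the share of paths that reached it."""
    counts = Counter(answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(answers)


# Final answers extracted from five hypothetical reasoning paths.
sampled_answers = ["190", "185", "190", "190", "185"]
print(majority_answer(sampled_answers))  # ('190', 0.6)
```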